Compute Governance Examples for Cloud, Data, and AI Workloads

Practical compute governance examples across cloud, data, AI, reporting, and automation workloads.


Compute governance matters when compute stops being invisible infrastructure and starts shaping operational cost, reliability, and speed. The practical question is not only whether a job can run, but whether the business can see who owns it, what it costs, why it deserves priority, and what should happen when usage spikes.

Compute governance in a real operating model

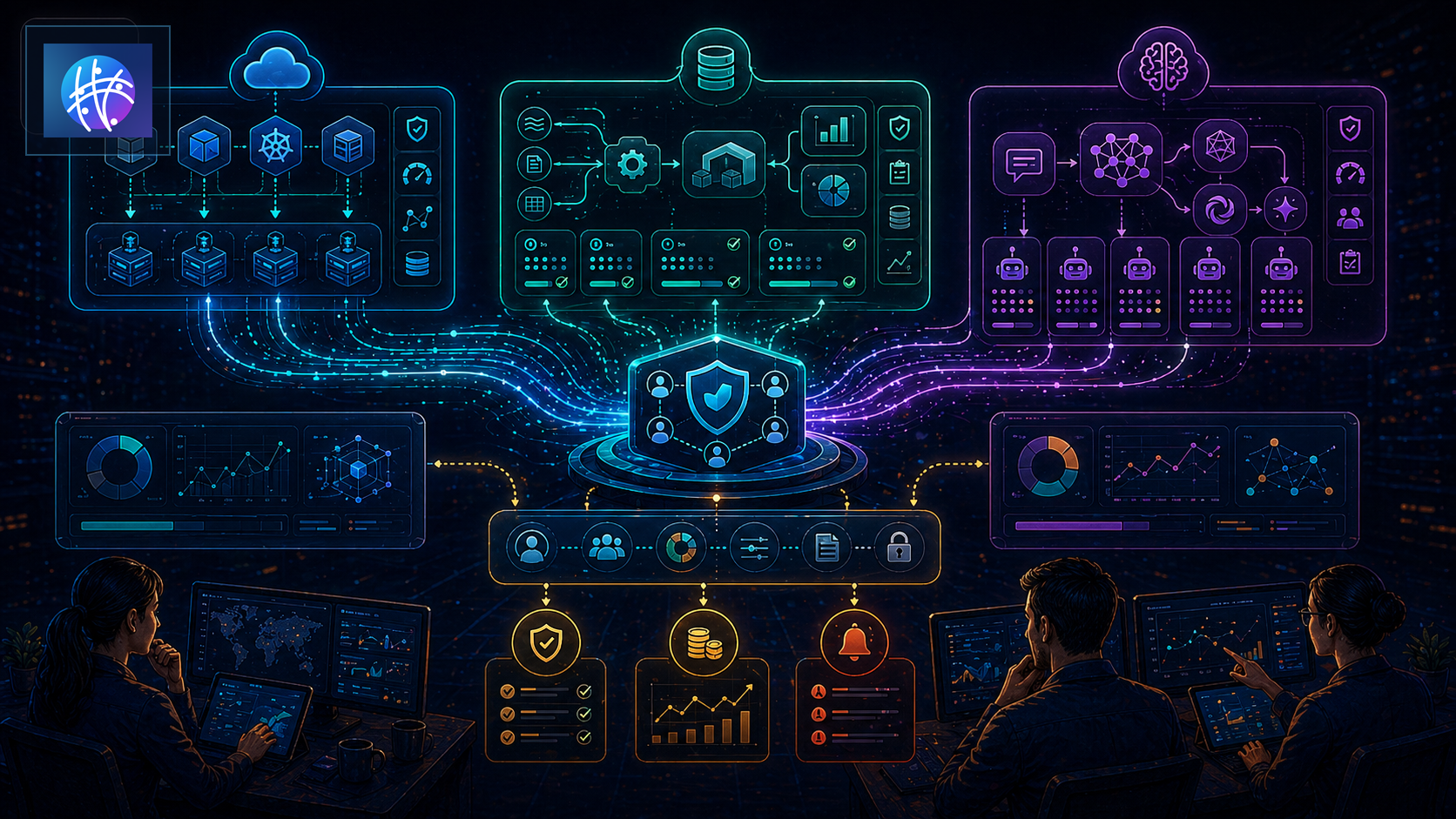

This guide walks through compute governance examples for cloud, data, and AI workloads, along with example compute policies. The practical situation is simple: cloud jobs, data pipelines, AI calls, and internal automations all consume compute in different places with different owners. When compute is tied to customer workflows, AI agents, data pipelines, or reporting refreshes, it needs governance that does not turn every useful workload into a ticket maze.

References such as the FinOps framework and guides on cost management and budget controls cover the financial side. Operators still need the execution side: intake, classification, limits, owner routing, monitoring, exception handling, and outcome review.

Intake, class, owner, limit, and outcome

Intake captures why a workload exists. Is it a customer-facing workflow, a data refresh, an AI agent, a report, a test job, an experiment, or a background automation? The class determines how much control it needs. Production workloads deserve different limits than experiments.
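
The intake and classification step can be expressed as a small lookup. This is a minimal sketch, assuming a hypothetical `IntakeRecord` type and an illustrative mapping from purpose to control level; your own classes and levels would replace them.

```python
from dataclasses import dataclass

# Hypothetical mapping from the intake "why does this exist?" answer to a
# control level. Purposes mirror the intake questions; levels are illustrative.
CONTROL_LEVEL = {
    "customer_workflow": "production",
    "data_refresh": "operational",
    "ai_agent": "operational",
    "report": "operational",
    "background_automation": "operational",
    "test_job": "experimental",
    "experiment": "experimental",
}


@dataclass
class IntakeRecord:
    name: str
    purpose: str  # expected to be one of the CONTROL_LEVEL keys


def classify(record: IntakeRecord) -> str:
    """Return the control level for a workload, rejecting unknown purposes."""
    if record.purpose not in CONTROL_LEVEL:
        # An unclassified workload is an intake error, not a silent default.
        raise ValueError(f"{record.name}: unknown purpose {record.purpose!r}")
    return CONTROL_LEVEL[record.purpose]


print(classify(IntakeRecord("checkout-sync", "customer_workflow")))  # production
print(classify(IntakeRecord("prompt-trial", "experiment")))  # experimental
```

Raising on an unknown purpose, rather than falling back to a permissive class, keeps unclassified workloads visible at intake.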

Ownership answers who pays attention when compute behaves badly. The owner is not always the engineer who created the job. Sometimes finance owns budget, operations owns workflow quality, data owns pipeline freshness, and product owns customer impact. Governance fails when those roles are implicit.
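
Making ownership explicit can be as simple as a per-concern owner map that refuses to answer when a role is missing. A minimal sketch, assuming a hypothetical `OWNERS` structure; the workload and team names are placeholders.

```python
# Hypothetical ownership map: every concern on a workload names an explicit
# owner. Workload and team names are placeholders.
OWNERS = {
    "nightly-transform": {
        "budget": "finance",
        "workflow_quality": "operations",
        "freshness": "data",
        "customer_impact": "product",
    },
}


def owner_for(workload: str, concern: str) -> str:
    """Return who responds when `concern` goes wrong for `workload`."""
    owner = OWNERS.get(workload, {}).get(concern)
    if owner is None:
        # Implicit ownership is the gap governance should surface, not hide.
        raise LookupError(f"no named owner for {concern!r} on {workload!r}")
    return owner


print(owner_for("nightly-transform", "budget"))  # finance
print(owner_for("nightly-transform", "freshness"))  # data
```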

Limits protect the business. Budgets, quotas, concurrency controls, rate limits, warehouse monitors, queue caps, autoscaling boundaries, and alert thresholds all prevent compute from becoming silent operational drift. Outcomes prove whether the workload still deserves the compute it consumes.
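
A usage snapshot can be checked against a workload's limits in a few lines. This sketch assumes hypothetical `Limits` and `Usage` records and an illustrative 80% budget alert threshold; real values would come from your budget, quota, and retry settings.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Limits:
    monthly_budget: float        # in any single currency unit
    max_concurrency: int
    max_retries: int
    alert_fraction: float = 0.8  # assumed: alert once 80% of budget is spent


@dataclass
class Usage:
    spend_to_date: float
    concurrency: int
    retries: int


def check_limits(usage: Usage, limits: Limits) -> list[str]:
    """Return every limit the current usage breaches or is about to breach."""
    findings = []
    if usage.spend_to_date >= limits.monthly_budget:
        findings.append("budget exceeded")
    elif usage.spend_to_date >= limits.alert_fraction * limits.monthly_budget:
        findings.append("budget alert threshold reached")
    if usage.concurrency > limits.max_concurrency:
        findings.append("concurrency above cap")
    if usage.retries > limits.max_retries:
        findings.append("retries above cap")
    return findings


limits = Limits(monthly_budget=500.0, max_concurrency=4, max_retries=3)
print(check_limits(Usage(spend_to_date=420.0, concurrency=6, retries=1), limits))
# ['budget alert threshold reached', 'concurrency above cap']
```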

A practical workload path

Picture cloud jobs, data pipelines, AI calls, and internal automations all consuming compute in different places with different owners. A weak process lets a workload keep running because someone needed it once. A stronger compute governance workflow classifies the workload, tags the owner, assigns a budget, defines priority, sets limits, connects monitoring, and reviews whether the output still matters.
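
The stronger workflow reads naturally as a record whose fields must all be filled before a workload counts as governed. A minimal sketch, assuming a hypothetical `GovernanceRecord` with one field per step; the field names are illustrative.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class GovernanceRecord:
    # One field per governance step; None means the step has not been done.
    workload_class: Optional[str] = None
    owner: Optional[str] = None
    budget: Optional[float] = None
    priority: Optional[str] = None
    limits: Optional[dict] = None
    monitoring: Optional[str] = None
    review_date: Optional[str] = None  # next outcome review, e.g. "2025-01-31"


def missing_steps(record: GovernanceRecord) -> list:
    """List the governance steps still missing, in workflow order."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]


record = GovernanceRecord(workload_class="operational", owner="data-team", budget=300.0)
print(missing_steps(record))  # ['priority', 'limits', 'monitoring', 'review_date']
```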

For example, a reporting refresh that once powered a leadership dashboard may now run hourly for a report nobody opens. An AI agent may retry failed tasks until model spend rises quietly. A data pipeline may process stale records because nobody tied compute usage to business value. Governance catches those gaps before finance sees only the bill.
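
The first example, a refresh that keeps running for a report nobody opens, is easy to flag once run counts and report views sit side by side. A minimal sketch, assuming a hypothetical `Refresh` record whose view count comes from the reporting tool's usage data; the sample jobs are made up.

```python
from dataclasses import dataclass


@dataclass
class Refresh:
    name: str
    runs_per_day: int
    report_views_30d: int  # assumed to come from the reporting tool's usage data


def flag_unused_refreshes(refreshes: list, min_views: int = 1) -> list:
    """Return refreshes that still run although their report is rarely opened."""
    return [r.name for r in refreshes
            if r.runs_per_day > 0 and r.report_views_30d < min_views]


jobs = [
    Refresh("leadership-dashboard", runs_per_day=24, report_views_30d=0),
    Refresh("daily-ops-report", runs_per_day=1, report_views_30d=40),
]
print(flag_unused_refreshes(jobs))  # ['leadership-dashboard']
```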

A worked workload review

A useful review starts with the workload record, not the invoice. Suppose a nightly data transform consumes meaningful warehouse credits. The review should show the owner, schedule, downstream reports, freshness requirement, failure history, average runtime, peak runtime, retry behavior, and business outcome. If the report is still used by leadership every morning, the cost may be justified. If the report is stale, duplicated, or unused, the compute should change.
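
The review logic for the nightly transform can be sketched as a function over the workload record. This covers only a subset of the fields listed above; `TransformReview`, the field names, and the 3x runtime-spike threshold are hypothetical choices for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class TransformReview:
    owner: str
    schedule: str  # e.g. a cron expression
    downstream_reports: list = field(default_factory=list)
    used_by_leadership: bool = False
    stale: bool = False
    duplicated: bool = False
    avg_runtime_min: float = 0.0
    peak_runtime_min: float = 0.0


def review(r: TransformReview) -> str:
    """Return a keep/change recommendation for a nightly transform."""
    if not r.downstream_reports or not r.used_by_leadership:
        return "change: no downstream report is actively used"
    if r.stale or r.duplicated:
        return "change: output is stale or duplicated"
    if r.avg_runtime_min and r.peak_runtime_min > 3 * r.avg_runtime_min:
        # Hypothetical threshold: peaks above 3x the average deserve a look.
        return "keep, but investigate runtime spikes"
    return "keep: cost justified by daily use"


nightly = TransformReview(owner="data-team", schedule="0 2 * * *",
                          downstream_reports=["exec-morning-dashboard"],
                          used_by_leadership=True,
                          avg_runtime_min=40, peak_runtime_min=55)
print(review(nightly))  # keep: cost justified by daily use
```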

Now compare that with an AI agent that runs after every support ticket. The cost is not only model calls. It may include retrieval, embeddings, tool calls, retries, human review, and logging. Compute governance should reveal whether the agent reduces support effort, improves routing, or simply adds invisible spend to every ticket. Without that view, teams confuse automation activity with operational value.
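
Adding up the full per-ticket cost makes the point concrete. The component values below are placeholders, not measurements, and `net_value_per_ticket` is a hypothetical helper that compares that cost with the support effort the agent saves.

```python
# Hypothetical per-ticket cost components for a support agent, in one
# currency unit. These numbers are placeholders, not measurements.
PER_TICKET_COST = {
    "model_calls": 0.040,
    "retrieval": 0.005,
    "embeddings": 0.002,
    "tool_calls": 0.010,
    "retries": 0.015,
    "human_review": 0.250,
    "logging": 0.001,
}


def net_value_per_ticket(costs: dict, minutes_saved: float, cost_per_minute: float) -> float:
    """Support effort saved per ticket minus the agent's full per-ticket cost."""
    return minutes_saved * cost_per_minute - sum(costs.values())


total = sum(PER_TICKET_COST.values())
print(f"full cost per ticket: {total:.3f}")  # full cost per ticket: 0.323
print(f"model calls share: {PER_TICKET_COST['model_calls'] / total:.0%}")  # model calls share: 12%
print(f"net value: {net_value_per_ticket(PER_TICKET_COST, 2.0, 0.5):.3f}")  # net value: 0.677
```

With these made-up numbers, model calls are a small share of the total, which is exactly the kind of finding a model-spend-only view would miss.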

The same pattern applies to CI runners, scheduled exports, warehouse refreshes, container jobs, vector searches, and background automations. Each workload needs a reason to exist, a right-sized execution pattern, and a review trigger when usage drifts.
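
A review trigger for usage drift can compare the latest period against a trailing baseline. A minimal sketch, assuming a simple mean baseline and a 50% tolerance; both are illustrative defaults to tune per workload, and the runner minutes are hypothetical.

```python
from statistics import mean


def usage_drifted(history: list, latest: float, tolerance: float = 0.5) -> bool:
    """True when `latest` exceeds the trailing average by more than `tolerance`.

    A tolerance of 0.5 means "more than 50% above baseline"; the default is
    an assumption, not a recommended value.
    """
    if not history:
        raise ValueError("need at least one prior period to form a baseline")
    baseline = mean(history)
    return latest > baseline * (1 + tolerance)


weekly_runner_minutes = [120, 130, 125, 118]  # hypothetical CI runner usage
print(usage_drifted(weekly_runner_minutes, latest=210))  # True
print(usage_drifted(weekly_runner_minutes, latest=140))  # False
```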

Governance tiers operators can use

A lean team does not need one policy for every workload. It needs tiers. Tier one can be experimental: small budget, expiration date, relaxed reliability, and clear owner. Tier two can be operational: recurring workload, monitored failures, basic budget alert, and outcome review. Tier three can be production-critical: protected priority, stronger alerting, rollback plan, approval for major changes, and executive visibility when spend or reliability changes.
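
The three tiers can live as data rather than prose, so tooling can read them. A minimal sketch, assuming a hypothetical `TierPolicy` record; the budget cap and expiry period for tier one are illustrative values, not recommendations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TierPolicy:
    name: str
    budget_cap: Optional[float]        # hard cap; None means alert-based budgets
    expires_after_days: Optional[int]  # None means no automatic expiry
    failure_alerting: str              # "relaxed", "monitored", or "strong"
    outcome_review: bool
    protected_priority: bool
    change_approval: bool              # approval plus rollback plan for major changes
    executive_visibility: bool


# Illustrative values only; every tier still requires a named owner.
TIERS = {
    1: TierPolicy("experimental", budget_cap=100.0, expires_after_days=30,
                  failure_alerting="relaxed", outcome_review=False,
                  protected_priority=False, change_approval=False,
                  executive_visibility=False),
    2: TierPolicy("operational", budget_cap=None, expires_after_days=None,
                  failure_alerting="monitored", outcome_review=True,
                  protected_priority=False, change_approval=False,
                  executive_visibility=False),
    3: TierPolicy("production-critical", budget_cap=None, expires_after_days=None,
                  failure_alerting="strong", outcome_review=True,
                  protected_priority=True, change_approval=True,
                  executive_visibility=True),
}

print(TIERS[1].expires_after_days)  # 30
print(TIERS[3].protected_priority)  # True
```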

These tiers prevent governance theater. Instead of asking every workload to go through the same process, teams match controls to risk. A low-cost experiment can move fast. A customer-facing workflow gets guardrails. A high-cost data pipeline gets cost and freshness review. A background agent gets retry and model-spend limits.

The broader shift is that compute governance is becoming part of operating design. As AI agents, data workflows, and automation systems grow, compute is no longer a back-office cloud line item. It is one of the resources that determines whether the business can execute reliably and affordably.

Three use cases teams can borrow

First, data pipelines and reporting jobs. Teams should know which refreshes are production-critical, which are exploratory, which can run less often, and which need stronger failure alerts. Freshness matters, but not every query deserves premium compute.

Second, AI agents and automation workflows. Agents can create hidden usage through retries, tool calls, embeddings, scoring, and background loops. Compute governance should expose trigger volume, retry behavior, model spend, and owner review before scaling.
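
Retry and spend limits for an agent step can be enforced in one guard around the call. This is a minimal sketch, assuming a fixed cost per attempt in integer cents; `run_with_guard` and `RunReport` are hypothetical names, not part of any agent framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RunReport:
    succeeded: bool
    attempts: int
    spend_cents: int
    stop_reason: str


def run_with_guard(task: Callable[[], bool], max_retries: int,
                   spend_cap_cents: int, cost_per_attempt_cents: int) -> RunReport:
    """Run `task` (returns True on success) with bounded retries and spend."""
    attempts, spend = 0, 0
    while attempts <= max_retries:  # one first attempt plus up to max_retries retries
        if spend + cost_per_attempt_cents > spend_cap_cents:
            return RunReport(False, attempts, spend, "spend cap reached")
        attempts += 1
        spend += cost_per_attempt_cents
        if task():
            return RunReport(True, attempts, spend, "succeeded")
    return RunReport(False, attempts, spend, "retry cap reached")


def always_fails() -> bool:
    return False  # stands in for a tool call that keeps failing


print(run_with_guard(always_fails, max_retries=5, spend_cap_cents=10, cost_per_attempt_cents=3))
# RunReport(succeeded=False, attempts=3, spend_cents=9, stop_reason='spend cap reached')
```

Returning a report instead of raising keeps the stop reason available for the owner review the paragraph calls for.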

Third, cloud and container workloads. Kubernetes quotas, warehouse monitors, CI runner limits, and cloud budgets help teams avoid runaway usage. The key is connecting those controls to workflow ownership, not treating them as isolated technical settings.

Rules, automation, and human review

Rules are useful for obvious controls: no untagged production workloads, no ownerless jobs, no unlimited retries, no high-cost warehouse without budget approval, and no experimental job running on production priority. Automation is useful when those rules need to be enforced continuously.
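
The five rules above translate directly into checks that automation can run continuously. A minimal sketch, assuming a hypothetical `Job` record and a hypothetical set of warehouse sizes that count as high-cost.

```python
from dataclasses import dataclass
from typing import Optional

HIGH_COST_SIZES = {"large", "xlarge"}  # hypothetical definition of "high-cost"


@dataclass
class Job:
    name: str
    environment: str            # "production" or "experiment"
    priority: str               # "production" or "standard"
    tags: dict
    owner: Optional[str]
    max_retries: Optional[int]  # None means unlimited
    warehouse_size: str
    budget_approved: bool


def violations(job: Job) -> list:
    """Return every rule the job breaks, in the order the rules are listed."""
    found = []
    if job.environment == "production" and not job.tags:
        found.append("untagged production workload")
    if not job.owner:
        found.append("no owner")
    if job.max_retries is None:
        found.append("unlimited retries")
    if job.warehouse_size in HIGH_COST_SIZES and not job.budget_approved:
        found.append("high-cost warehouse without budget approval")
    if job.environment == "experiment" and job.priority == "production":
        found.append("experiment on production priority")
    return found


job = Job("ad-hoc-backfill", "experiment", "production", {}, None, None, "xlarge", False)
print(violations(job))
```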

Human review still matters when the tradeoff is real. A workload might be expensive but valuable. Another may be cheap but operationally risky. A third may be a temporary experiment that should expire automatically. Good governance routes exceptions to owners with context instead of blocking everything by default.
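
Routing an exception to its owner with context, instead of blocking, might look like the following. A minimal sketch, assuming a hypothetical `PolicyException` record and a hypothetical `platform-team` fallback for ownerless work.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class PolicyException:
    workload: str
    owner: Optional[str]
    rule: str
    monthly_cost: float
    business_value: str                # e.g. "high" or "low"
    risk: str                          # e.g. "high" or "low"
    expires_on: Optional[date] = None  # set for temporary experiments


def route_exception(exc: PolicyException, today: date) -> dict:
    """Expire, escalate, or route to the owner with context; never block by default."""
    if exc.expires_on is not None and today >= exc.expires_on:
        return {"action": "expire", "workload": exc.workload}
    context = (f"{exc.workload} broke rule '{exc.rule}': cost {exc.monthly_cost:.0f}/month, "
               f"value {exc.business_value}, risk {exc.risk}")
    if exc.owner is None:
        # Hypothetical fallback queue for ownerless work.
        return {"action": "escalate", "to": "platform-team", "context": context}
    return {"action": "review", "to": exc.owner, "context": context}


exc = PolicyException("gpu-batch", "ml-team", "high-cost without budget approval",
                      monthly_cost=2400.0, business_value="high", risk="low")
decision = route_exception(exc, date(2024, 6, 1))
print(decision["action"], decision["to"])  # review ml-team
```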

Public documentation on resource controls and usage monitoring is useful, but the operating model matters most. Compute governance should help teams make better decisions, not merely produce another spend dashboard.

What breaks first in production

The first failure mode is ownerless compute. Jobs, warehouses, agents, queues, and runners keep spending because nobody knows who can turn them off.

The second failure mode is priority confusion. Low-value jobs compete with customer-facing workflows because all workloads look equal to the platform.

The third failure mode is cost-only governance. Teams cut spend without understanding which workloads protect revenue, customer experience, or operational trust. That creates fragility disguised as savings.

Rollout pattern

Start with one workload class: data jobs, AI agents, CI runs, cloud environments, or reporting refreshes. Define owner fields, budget boundaries, priority levels, monitoring expectations, and exception paths.

Then review real usage. Pull the top workloads by cost, runtime, retry volume, and business value. Ask whether each one has an owner, an outcome, a limit, and a review cadence. The first audit usually exposes more orphaned work than anyone expects.
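
The first audit can start as a ranked list with each workload's governance gaps. A minimal sketch, assuming a hypothetical `WorkloadStats` record ranked by cost (ties broken by retries); the sample workloads and figures are made up.

```python
from dataclasses import dataclass
from typing import Optional

GOVERNANCE_FIELDS = ("owner", "outcome", "limit", "review_cadence")


@dataclass
class WorkloadStats:
    name: str
    monthly_cost: float
    retries_30d: int
    owner: Optional[str] = None
    outcome: Optional[str] = None
    limit: Optional[str] = None
    review_cadence: Optional[str] = None


def audit(workloads: list, top_n: int = 3) -> dict:
    """Rank by cost (ties broken by retries) and list each top workload's gaps."""
    ranked = sorted(workloads, key=lambda w: (w.monthly_cost, w.retries_30d), reverse=True)
    return {w.name: [f for f in GOVERNANCE_FIELDS if getattr(w, f) is None]
            for w in ranked[:top_n]}


workloads = [
    WorkloadStats("nightly-transform", 1200.0, 4, "data-team", "exec dashboard", "warehouse monitor", "monthly"),
    WorkloadStats("support-agent", 900.0, 250, owner="support-ops"),
    WorkloadStats("legacy-export", 400.0, 0),
    WorkloadStats("ci-runners", 150.0, 12, "platform", "merge gate", "runner cap", "quarterly"),
]
for name, gaps in audit(workloads).items():
    print(name, gaps or "ok")
# nightly-transform ok
# support-agent ['outcome', 'limit', 'review_cadence']
# legacy-export ['owner', 'outcome', 'limit', 'review_cadence']
```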

Finally, connect governance to execution. Budget alerts should route to owners. Retry spikes should create workflow review. Low-value jobs should expire. Critical workloads should receive protected priority. That is how governance becomes useful instead of ceremonial.
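
Connecting signals to execution can begin with a small dispatch table. A minimal sketch, assuming hypothetical signal kinds and dictionary fields; in practice each action would call a notification, ticketing, or scheduler system rather than return a string.

```python
from typing import Callable, Dict

# Hypothetical signal kinds mapped to the actions described above.
ACTIONS: Dict[str, Callable[[dict], str]] = {
    "budget_alert": lambda s: f"notify {s['owner']}: {s['workload']} at {s['percent']}% of budget",
    "retry_spike": lambda s: f"open workflow review for {s['workload']}",
    "low_value": lambda s: f"schedule expiry for {s['workload']}",
    "critical_contention": lambda s: f"raise priority for {s['workload']}",
}


def handle(signal: dict) -> str:
    """Turn a governance signal into an action; unknown kinds are only logged."""
    action = ACTIONS.get(signal["kind"])
    return action(signal) if action else f"log only: {signal['kind']}"


print(handle({"kind": "budget_alert", "owner": "data-team",
              "workload": "nightly-transform", "percent": 85}))
# notify data-team: nightly-transform at 85% of budget
print(handle({"kind": "retry_spike", "workload": "support-agent"}))
# open workflow review for support-agent
```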

Where Meshline fits

Meshline fits when compute governance needs to connect workload signals to owners, actions, and outcomes. Meshline is Autonomous Operations Infrastructure for trigger-to-outcome execution, ownership and control, and system-led execution.

Teams often pair this work with the event routing console, automation data sync, and the data infrastructure glossary. The goal is to make compute governance operational: visible triggers, named owners, automated controls, and reviewable outcomes.

QA checklist before rollout

- Does every workload have an owner and business reason?

- Are production, experiment, data, AI, and reporting workloads classified differently?

- Are budgets, quotas, concurrency, retry, and rate limits visible?

- Can operators see cost, reliability, freshness, and outcome together?

- Do alerts route to the owner with context?

- Are stale workloads expired or reviewed?

- Can leadership distinguish savings from risky under-provisioning?

Final takeaway

Compute governance works when compute becomes an owned operating resource instead of invisible spend. Start with one workload class, add owners and limits, review actual usage, and connect every control to a business outcome.